azetta ai

Physics Grounded AI Research Lab.

Building state-of-the-art AI that is interpretable, steerable, and efficient.

// OUR MISSION

AI Should Be Safe, Transparent, and Sustainable.

"People outside the field are often surprised to learn that we do not understand how our own AI creations work. This lack of understanding is unprecedented in the history of technology."

— Dario Amodei, CEO Anthropic · 2025

▶ The Black Box Problem

Today's AI is a black box running on brute force. Models grow larger every year, yet no one can explain why they hallucinate, discriminate, or fail. Bias lives inside weights beyond our reach, and the frontier labs' attempts at reverse-engineering AI are extremely inefficient: Anthropic itself admits that fully understanding a model with its current approaches would take far more compute than training the model did. We believe this happens because current frontier models are fundamentally opaque, and that a viable, scalable solution has to go back to the foundations. Our mission is to rebuild AI so that every decision is explainable, every behaviour is correctable, and models can improve and scale far more efficiently.

▶ Every Major AI Risk Traces Back to Interpretability

Alignment

Harmful AI incidents hit 233 in 2024, up 56% YoY (Stanford HAI). We cannot verify what a model is optimising for: goals appear aligned but remain uninspectable.

Hallucinations

LLMs hallucinate on 75%+ of legal queries. We can detect errors in outputs, but without interpretability we cannot stop them at the source.

Bias & Fairness

LLMs preferred white-sounding names 85% of the time in hiring simulations. EEOC's first AI bias settlement: $325K. Bias lives inside weights, beyond our reach.

Privacy Leakage

Researchers extracted PII from ChatGPT for ~$200. 5%+ of outputs are verbatim training copies. No mechanism exists to audit what a model retained.

IP Exposure

Courts hold companies liable for AI outputs (Air Canada, 2024). Without interpretability, IP exposure is unquantifiable: we cannot trace which training data shaped a model's behaviour.

Harmful Use

EU AI Act mandates human oversight for high-risk AI, with penalties for non-compliance of up to 6% of global revenue. Safety filters are surface patches on opaque systems.

▶ Why This Matters

The Ceiling on Progress

Model self-improvement requires interpretability. Without it, we cannot guide models reliably or safely.

Regulated Industries Are Locked Out

Healthcare, finance, legal, and defence cannot deploy black-box AI where decisions must be audited or legally defended.

Full Automation Requires Control

You cannot delegate what you cannot inspect — and today, we cannot inspect these systems.

// OUR APPROACH

Reimagining AI Through a Physics Lens.

We believe information has its own physics. Reimagining AI through this lens reveals a fundamental mathematical structure — and building based on it gives us models that are interpretable, steerable and efficient by design.

- Interpretable: one neuron, one concept. Full audit trail from input to output.

- Steerable: target specific neurons. Correct behaviour directly, with no retraining.

- Efficient: 10× fewer parameters, 90% faster training.

- Performant: performance matches or exceeds the state of the art.

// OUR RESEARCH

Pioneering the Field of Physics Grounded AI.

YAT KERNEL

The Yat Kernel is a physics-grounded Mercer kernel that captures both alignment and proximity to create highly efficient gravity wells in representation space.

ⵟ(x, w) = (x·w)² / ‖x − w‖²

Unlike dot products and cosines, the YAT kernel measures how much a weight vector acts as an attractor for an input. Each neuron bends representation space around itself — creating distinct, non-overlapping gravity wells. The result: monosemantic neurons by design, interpretability without any post-hoc approximation.
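To make the formula concrete, here is a minimal Python sketch of the score defined above. It is an illustration only, not our production implementation: the function names `yat` and `cosine`, the example vectors, and the small `eps` added to the denominator (the formula as written is undefined when x = w) are all assumptions made for this example.

```python
import math


def yat(x, w, eps=1e-12):
    """Yat score (x·w)² / (‖x − w‖² + eps) for two equal-length vectors.

    `eps` is an assumption of this sketch; it only guards against
    division by zero when x and w coincide.
    """
    dot = sum(xi * wi for xi, wi in zip(x, w))
    sq_dist = sum((xi - wi) ** 2 for xi, wi in zip(x, w))
    return dot * dot / (sq_dist + eps)


def cosine(x, w):
    """Plain cosine similarity, shown for comparison."""
    dot = sum(xi * wi for xi, wi in zip(x, w))
    return dot / (math.hypot(*x) * math.hypot(*w))


w = [1.0, 0.0]        # hypothetical weight vector of one neuron
near = [0.9, 0.1]     # well aligned with w and close to it
far = [9.0, 1.0]      # same direction as `near`, but ten times farther out
ortho = [0.0, 1.0]    # orthogonal to w

for name, x in [("near", near), ("far", far), ("ortho", ortho)]:
    dot = sum(xi * wi for xi, wi in zip(x, w))
    print(f"{name:5s} dot={dot:6.2f} cos={cosine(x, w):5.3f} yat={yat(x, w):7.3f}")
```

In this toy setup the dot product ranks `far` above `near` and cosine similarity cannot tell them apart, while the Yat score is roughly 40.5 for `near`, 1.25 for `far`, and 0 for `ortho`. An input has to be both aligned with the weight vector and close to it to score highly.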

Kernel Neurons

Foundational research for Physics Grounded AI; Neural-Matter Networks outperform traditional architectures.

SLAY Attention

Linear-time YAT-powered attention mechanism that outperforms standard softmax attention.

Read paper →

// OUR PRODUCTS

We Build Glass Box Models + the Tools to Understand Them.

As we establish and grow the field of Physics Grounded AI, we also build products that give researchers, engineers and enterprises seamless, safe, and scalable access to our research findings.

PERIODICA

// The first MLOps platform built for interpretability

Upload any AI model — Periodica maps every neuron to a concept and lets you steer behaviour directly. No retraining. No black boxes.

PyTorch · TensorFlow · ONNX · HuggingFace

Aether Models

// Fully interpretable SOTA models, available via API

Aether models are Physics Grounded AI in production: fully interpretable, steerable models that deliver state-of-the-art performance with ~10× fewer parameters.

// WHO WE ARE

The Founding Team.

Mathematical rigour, entrepreneurial experience, and product acumen.

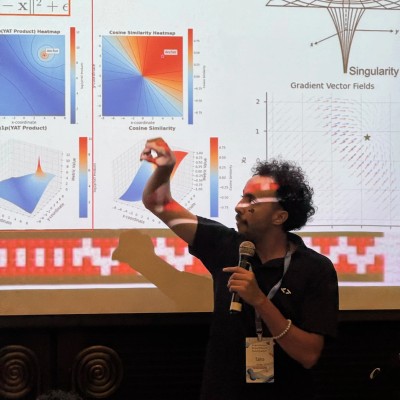

Taha Bouhsine

Co-Founder & CEO

Mathematician, engineer, and computer scientist; architect of the Physics Grounded AI framework. Authored the core interpretability methodology now under review at ICML 2026. Google Developer Expert in AI and JAX.

Douglas Seo

Co-Founder & CTO

Third-time founder and lead engineer at Azetta AI. Popper CEO (Founders Inc. Cold Start); Werkflow CTO/Lead Founding Engineer ($1.5M VC backed). UC Berkeley Electrical Engineering + Computer Science.

Jose Miguel L.

Co-Founder & CPO

Ex-Apple Engineering Product Manager for AI/ML products. Founding team at YC-backed startup, leading Product, Data and Tech teams. Schwarzman Scholar and Columbia MBA + MS in AI/ML (co-author of ICML publication).

Interested? Reach out!

We are looking for researchers, engineers, investors and partners who want to build safe AI with us.

If that sounds like you, shoot us an email!